Most test automation still requires a human to write every script, maintain every selector, and decide what gets tested. Autonomous testing refers to something different: software testing where AI handles the generation, execution, and analysis of tests without step-by-step human instruction.

That’s a meaningful distinction. Traditional test automation automates the execution of tests a human designed. Autonomous testing uses AI to handle parts of the thinking: what to test, how to test it, and what the results mean.

It’s an emerging approach. Fully autonomous testing across a complex production system isn’t here yet. But the components exist today, teams are combining them now, and the testing process is already changing as a result.

What Autonomous Testing Actually Means

Autonomous testing is a software testing approach that uses AI models, agents, and automated tooling to perform testing activities with minimal human intervention. Depending on how it’s implemented, it can cover:

- Test generation — AI creates test cases from requirements, user stories, or existing code

- Test execution — agents run tests, navigate interfaces, and interact with APIs without hardcoded scripts

- Result analysis — AI interprets failures, distinguishes real bugs from flaky tests, and logs defects

- Test maintenance — when UI or API changes break tests, AI updates them automatically

No implementation does all four at full autonomy right now. Most real-world autonomous testing handles one or two of these well and leaves the others partially manual.

The Architecture Behind Autonomous Testing

To understand how autonomous testing works, you need to understand three components and how they connect.

The LLM: The Reasoning Layer

A large language model (LLM) is the core intelligence. It generates test cases from natural language requirements, writes test scripts, analyzes error logs, and interprets what a test failure means in plain language.

On its own, an LLM has a significant limitation for testing: it knows nothing about your specific application. It can write generic test cases, but it can’t generate tests grounded in your actual endpoints, data models, or user flows without being given that context.

RAG: The Context Layer

Retrieval-augmented generation (RAG) solves the LLM’s context problem. It connects the AI to your team’s actual knowledge: Jira tickets, API specifications, database schemas, test coverage reports, and requirements documents.

When a QA team feeds their documentation into a RAG system, the LLM stops generating generic tests and starts generating tests that reflect the real application. “Test the checkout flow” becomes test cases that account for your actual payment integrations, your specific edge cases, your real user data patterns.
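A minimal sketch of the retrieval step, assuming a toy in-memory knowledge base and a plain word-overlap score standing in for a real embedding search. The document names, their contents, and the `retrieve` and `build_prompt` helpers are hypothetical and only illustrate how retrieved context ends up in the prompt.

```python
import re

# Hypothetical knowledge base: in practice these would be Jira tickets,
# API specs, and schemas loaded from your own systems.
KNOWLEDGE_BASE = {
    "checkout-api-spec": "POST /checkout accepts cart_id and payment_method; card and paypal supported",
    "ticket-payments-edge-case": "Checkout fails when payment_method is paypal and cart total is zero",
    "login-requirements": "Login locks the account after five failed password attempts",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9_]+", text.lower()))


def retrieve(query: str, top_k: int = 2) -> list[str]:
    """Return the names of the top_k documents sharing the most words with the query."""
    query_words = tokenize(query)
    scored = [
        (len(query_words & tokenize(body)), name)
        for name, body in KNOWLEDGE_BASE.items()
    ]
    # Keep only documents with at least one shared word, best match first.
    ranked = sorted((s for s in scored if s[0] > 0), reverse=True)
    return [name for _, name in ranked[:top_k]]


def build_prompt(task: str) -> str:
    """Combine the retrieved context with the task into a single LLM prompt."""
    context = "\n".join(f"- {KNOWLEDGE_BASE[name]}" for name in retrieve(task))
    return f"Context:\n{context}\n\nTask: {task}"
```

Calling `build_prompt("Test the checkout flow with paypal payment_method")` would place the two checkout-related documents ahead of the task, so the model sees the zero-total PayPal edge case before it writes any test.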

AI Agents: The Execution Layer

The agent is what makes autonomous testing genuinely autonomous. An AI agent can use tools: run a test suite, submit a form, call an API, log a defect in Jira, or trigger a CI/CD pipeline. The LLM reasons; the agent acts.

A simple example: the LLM generates a test scenario for a login flow. The agent opens the browser, navigates to the URL, interacts with the form elements, checks the result, and logs pass or fail, all without a human running a script.

These three layers together (reasoning, context, and action) are what separate autonomous testing from traditional test automation. Traditional automation runs what you tell it to run. Autonomous testing decides what to run, figures out how to run it, and interprets what happened.
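The split between reasoning and action in the login example can be sketched as follows. This is an illustration under stated assumptions, not a real agent framework: `FakeBrowser` is a hypothetical stand-in for a browser driver, `plan_login_test` is a stub where a real system would call an LLM, and the URL and credentials are made up.

```python
from dataclasses import dataclass, field


@dataclass
class FakeBrowser:
    """Stand-in for a real browser driver; records state instead of rendering pages."""
    url: str = ""
    fields: dict[str, str] = field(default_factory=dict)
    page_text: str = ""

    def goto(self, url: str) -> None:
        self.url = url

    def fill(self, name: str, value: str) -> None:
        self.fields[name] = value

    def click(self, name: str) -> None:
        # Hypothetical app behaviour: one hard-coded valid credential pair.
        if name == "submit":
            ok = self.fields.get("user") == "alice" and self.fields.get("password") == "s3cret"
            self.page_text = "Welcome, alice" if ok else "Invalid credentials"


def plan_login_test() -> list[tuple]:
    """Stub for the reasoning layer: a real system would ask an LLM for these steps."""
    return [
        ("goto", "https://example.test/login"),
        ("fill", "user", "alice"),
        ("fill", "password", "s3cret"),
        ("click", "submit"),
        ("expect_text", "Welcome"),
    ]


def run_plan(browser: FakeBrowser, plan: list[tuple]) -> str:
    """Execution layer: dispatch each planned step to a browser tool and report pass/fail."""
    for action, *args in plan:
        if action == "expect_text":
            if args[0] not in browser.page_text:
                return "fail"
        else:
            # Look up the tool by name (goto, fill, click) and call it.
            getattr(browser, action)(*args)
    return "pass"
```

The plan is plain data, so the same `run_plan` loop could execute steps produced by any planner; swapping the stub for a model call would not change the execution layer.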

What Autonomous Testing Tools Can Do Today

Generate Test Cases Automatically

AI can generate test cases from requirements, feature descriptions, or user stories in seconds. A QA engineer who receives a spec for a new checkout feature no longer writes 50 test cases from scratch; they review and adjust 50 AI-generated cases in a fraction of the time.

The cases aren’t perfect. Business logic, edge cases specific to your domain, and anything not in the training context will need human review. But the mechanical work of generating obvious positive and negative scenarios is gone.

Perform Exploratory Testing

This is where autonomous testing platforms show the most promise right now. AI agents can navigate a web interface, click every interactive element, submit forms with valid and invalid data, and report everything unusual they find without being told specifically what to test.

A human tester doing exploratory testing brings intuition and experience. An AI agent brings exhaustiveness and speed. It will test every input combination in a dropdown that a human would skip, and it doesn’t get tired at hour three.

Maintain Tests When the Application Changes

Traditional test automation breaks when the application changes. A button gets renamed, a selector shifts, an API endpoint changes its response format, and suddenly dozens of tests fail, not because of bugs but because the scripts are stale.

Autonomous testing tools can detect these breaking changes and update test scripts automatically. The agent sees that the selector no longer matches, finds the correct element by context, updates the test, and reruns it. This alone reduces one of the largest ongoing costs of test automation.
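One way to picture "finding the correct element by context" is a fallback match on the element's tag and visible text. The sketch below assumes elements are available as simple dictionaries and uses `difflib` text similarity with an arbitrary 0.6 threshold; the `heal_selector` function and the data shape are hypothetical, and real tools would query the live DOM and weigh more signals.

```python
from difflib import SequenceMatcher
from typing import Optional


def similarity(a: str, b: str) -> float:
    """Case-insensitive text similarity between 0.0 and 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def heal_selector(old: dict, page_elements: list[dict], threshold: float = 0.6) -> Optional[str]:
    """Return the id of the element best matching the last known snapshot, or None.

    `old` is the snapshot of the element the test used to target,
    with the keys "id", "tag", and "text".
    """
    # If the original id still exists, nothing needs healing.
    for element in page_elements:
        if element["id"] == old["id"]:
            return element["id"]

    # Otherwise pick the same-tag element whose visible text is closest.
    best_id, best_score = None, 0.0
    for element in page_elements:
        if element["tag"] != old["tag"]:
            continue
        score = similarity(element["text"], old["text"])
        if score > best_score:
            best_id, best_score = element["id"], score

    # Refuse to guess when nothing is similar enough.
    return best_id if best_score >= threshold else None
```

Returning `None` below the threshold is a deliberate choice in this sketch: a test that cannot be healed with reasonable confidence is better flagged for human review than silently pointed at the wrong element.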

Analyze Results and Identify Flaky Tests

Not every failing test is a bug. Some tests fail intermittently due to timing issues, environment flakiness, or network variability. Identifying flaky tests manually requires pattern recognition across many runs, which is tedious work where patterns are easy to miss.

AI can flag a test as likely flaky after observing it fail without consistent reproduction, saving QA engineers from investigating non-issues. It can also analyze failure messages, stack traces, and screenshots to classify what type of failure occurred and suggest the likely cause before a human looks at it. The output feeds directly into test reports that stakeholders can read without needing to understand the underlying test infrastructure.
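The simplest version of this signal needs no model at all: a test that both passes and fails on the same commit is failing without consistent reproduction. The sketch below applies that rule to a run history; the `classify_tests` name, the tuple layout, and the three labels are assumptions for illustration, and real tools combine this with logs, timing, and screenshots.

```python
from collections import defaultdict


def classify_tests(runs: list[tuple[str, str, bool]]) -> dict[str, str]:
    """Classify each test from its run history.

    Each run is (test_name, commit_sha, passed). Labels:
      "flaky"              - passed and failed on the same commit
      "stable"             - passed on every recorded run
      "consistent_failure" - failed consistently on at least one commit
    """
    # test name -> commit -> set of outcomes observed on that commit
    outcomes = defaultdict(lambda: defaultdict(set))
    for name, commit, passed in runs:
        outcomes[name][commit].add(passed)

    labels = {}
    for name, by_commit in outcomes.items():
        if any(len(seen) == 2 for seen in by_commit.values()):
            labels[name] = "flaky"
        elif all(seen == {True} for seen in by_commit.values()):
            labels[name] = "stable"
        else:
            labels[name] = "consistent_failure"
    return labels
```

Grouping by commit matters here: a test that passes on one commit and fails on the next is more likely a real regression than a flaky test, so it lands in `consistent_failure` and still gets investigated.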

Where Autonomous Testing Doesn’t Work Yet

Honest assessment matters here. Several things autonomous testing is not yet capable of doing well:

- Fully replacing judgment about what matters. An AI can generate 200 test scenarios for a payment feature. Deciding which 40 actually need to be in the regression suite, based on business risk, user impact, and team capacity, still requires a human. The AI doesn’t know that your payment processor has a known edge case, or that your biggest customer uses a specific rarely-tested flow.

- End-to-end testing across complex distributed systems. Testing that spans multiple microservices, third-party APIs, and stateful data flows is hard to fully automate even with traditional tools. System integration testing in these environments helps significantly but still needs human orchestration of the overall strategy.

- Testing without human-defined acceptance criteria. The AI generates tests, but it generates them against some definition of “correct.” That definition of what the application is supposed to do still comes from humans. Without clear requirements, autonomous test generation produces tests that reflect the current behavior rather than the intended behavior. That’s circular.

- Performance and load testing. Autonomous testing today focuses mostly on functional testing: does the application behave correctly? Performance testing asks whether it performs correctly under load, which requires different tooling and different success criteria that AI models currently don’t handle autonomously.

Autonomous Testing vs. Traditional Test Automation

Traditional test automation is not going away. The testing tools you already use (Playwright, Selenium, Cypress) remain the execution layer in most autonomous testing architectures. What changes is the layer above them: who writes the scripts, who maintains them, and who analyzes the results.

| | Traditional Test Automation | Autonomous Testing |
| --- | --- | --- |
| Test creation | Written manually by QA engineers | AI generates from requirements or exploration |
| Test maintenance | Manual updates when app changes | AI detects changes and updates tests |
| Execution | Runs fixed scripts on a schedule | Agents execute dynamically, adapting to the interface |
| Failure analysis | Engineers investigate failures manually | AI classifies failures and identifies likely causes |
| Exploratory coverage | Defined explicitly in advance | AI explores what wasn’t explicitly defined |
| Human role | Script writer and executor | Architect and reviewer |

How to Implement Autonomous Testing

Start With One Use Case

Don’t try to make your entire testing process autonomous at once. Pick one area where the current approach is clearly painful, typically test maintenance (scripts breaking from UI changes) or initial test generation for new features, and build there first.

Connect AI to Your Actual Documentation

Autonomous testing without context produces generic tests. The RAG layer is what makes it useful. Before you can generate meaningful tests for your application, the AI needs access to your requirements, your API specs, your Jira tickets, and your existing test suite. Set up that knowledge base before running generation at scale. Teams already using BDD test cases have an advantage here: structured Gherkin scenarios are clean input for AI generation.

Integrate With Your CI/CD Pipeline

Autonomous testing delivers continuous value when it runs automatically on every code change. That means CI/CD integration is how autonomous testing becomes part of the development cycle rather than a separate QA phase. Connecting your autonomous testing platform to GitHub Actions, GitLab CI, or Jenkins puts test execution where it belongs: triggered by code changes, not scheduled by a person. Pair this with quality gates to block deployments when AI-detected failures cross a defined threshold.
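A quality gate of this kind can be as small as one function whose return value becomes the exit code of a pipeline step. The sketch below is an assumption about how such a gate might look: the `gate_exit_code` name, the `"failed"` status string, and the 5% default threshold are illustrative and not taken from any specific CI product.

```python
def gate_exit_code(statuses: list[str], max_failure_rate: float = 0.05) -> int:
    """Return 0 to allow the deployment or 1 to block it.

    `statuses` holds one entry per executed test, for example "passed" or "failed".
    """
    if not statuses:
        # No results at all is treated as a failed gate, not a free pass.
        return 1
    failed = sum(1 for status in statuses if status == "failed")
    failure_rate = failed / len(statuses)
    return 0 if failure_rate <= max_failure_rate else 1
```

In a pipeline, a script would typically pass this value to `sys.exit`, since GitHub Actions, GitLab CI, and Jenkins all treat a non-zero exit code as a failed step.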

Use a Test Management System to Organize the Output

AI can generate hundreds of test cases quickly. Without organization, that becomes noise. A test management platform tracks what tests exist, which ran, what failed, and what’s covered, giving your QA team the visibility to make decisions about the output AI produces. This is how autonomous test generation stays manageable rather than overwhelming.

Keep Humans in the Decision Loop

Autonomous testing changes how QA engineers spend their time, not whether they’re needed. The practical implementation looks like this: AI drafts, humans review. AI executes, humans interpret ambiguous failures. AI generates coverage reports, humans decide whether the coverage is adequate. That division works. Removing humans from the loop entirely doesn’t.

The Role of QA Engineers in Autonomous Testing

The QA engineer’s job in an autonomous testing environment is closer to an architect’s than a script writer’s. The practical skills that matter are shifting, a topic covered in depth in roles and responsibilities in a software testing team. High value now:

- Prompt engineering — knowing how to instruct AI systems to generate useful tests

- Test strategy — deciding what types of testing matter for a given system and what acceptable coverage looks like

- Failure triage — reviewing AI-generated failure reports and making judgment calls about what needs human investigation

- Knowledge base management — keeping the documentation that feeds RAG accurate and current

Lower value than before:

- Manual script writing for well-understood test scenarios

- Manually maintaining selectors and test scripts as UI changes

- Manually analyzing large volumes of test results

QA teams that adopt autonomous testing well are handling more test coverage with the same headcount, running tests more frequently, and catching issues earlier in the testing cycle. The job gets more strategic.

Benefits of Autonomous Testing

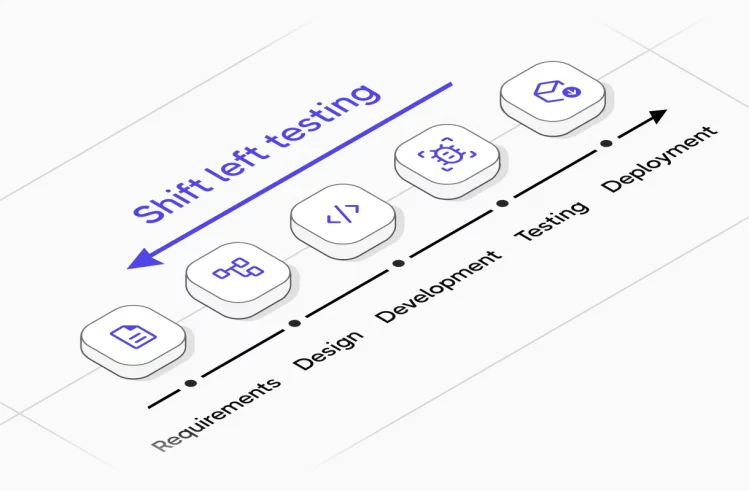

- Faster coverage of new features. When a developer opens a pull request, autonomous testing can generate test cases for the new functionality and run them before a human QA engineer reviews the PR. Defects surface in hours rather than days.

- Consistent regression coverage. AI doesn’t skip tests when a sprint is rushed. The regression testing that gets skipped at the end of a release cycle for lack of time runs every time when it’s autonomous.

- Realistic test data. AI can generate diverse, realistic test data that covers edge cases human testers don’t think to include: unusual input combinations, boundary values, format variations. Manually created test data tends to cluster around obvious cases. Tracking how this affects your software testing quality metrics over time shows whether autonomous generation is actually improving coverage.

- Reduced maintenance cost. Test maintenance is one of the most expensive ongoing costs in traditional automation. Scripts break, engineers fix them, the cycle repeats. Autonomous testing cuts this by having AI handle the maintenance that comes from normal application evolution.
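To make the boundary values mentioned under realistic test data concrete, here is a deterministic sketch of classic boundary-value analysis for an integer field. It is not AI generation, only an illustration of the cases a generator would be expected to cover; the `boundary_cases` helper is hypothetical.

```python
def boundary_cases(minimum: int, maximum: int) -> list[tuple[int, bool]]:
    """Return (value, expected_valid) pairs around both limits of an inclusive range."""
    # Values just outside, on, and just inside each limit, de-duplicated and sorted.
    values = sorted({
        minimum - 1, minimum, minimum + 1,
        maximum - 1, maximum, maximum + 1,
    })
    return [(value, minimum <= value <= maximum) for value in values]
```

For a quantity field that accepts 1 to 10, `boundary_cases(1, 10)` returns `[(0, False), (1, True), (2, True), (9, True), (10, True), (11, False)]`, which includes the two invalid values that hand-written data sets often leave out.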

Challenges of Autonomous Testing

- Environment complexity. Autonomous testing needs stable, accessible test environments. A QA team running 50 microservices with complex data dependencies can’t hand that to an AI agent and expect full autonomous coverage. Environment management remains largely a human problem.

- Trust calibration. QA teams new to autonomous testing often either trust the output too much (and miss genuine bugs that AI misclassified) or too little (and recreate manual work alongside the autonomous system). Calibrating how much to rely on AI analysis takes time and experience with a specific system.

- Context freshness. The RAG knowledge base needs to stay current. If your API documentation is six months out of date, the tests AI generates from it will reflect the old API. Someone has to own keeping that documentation accurate; that’s a new responsibility autonomous testing creates.

- Initial setup time. Getting an autonomous testing system connected to your CI/CD pipeline, test management platform, issue tracker, and documentation takes time upfront. Teams that approach it as a quick win are usually disappointed. Teams that treat it as infrastructure investment see the returns.
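The context freshness challenge above lends itself to a simple automated check. The sketch below assumes you can obtain a last-updated date for each document in the knowledge base; the `stale_documents` helper, the document names, and the 90-day default are hypothetical choices.

```python
from datetime import date, timedelta


def stale_documents(last_updated: dict[str, date], today: date, max_age_days: int = 90) -> list[str]:
    """Return the names of documents not updated within max_age_days, sorted alphabetically."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(name for name, updated in last_updated.items() if updated < cutoff)
```

Running a check like this on a schedule and sending the result to the documentation owner is one way to turn "someone owns this" into a routine task rather than a periodic surprise.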

Autonomous Testing With Testomat.io

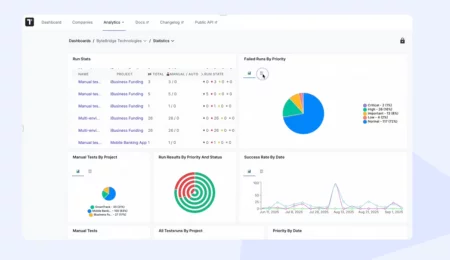

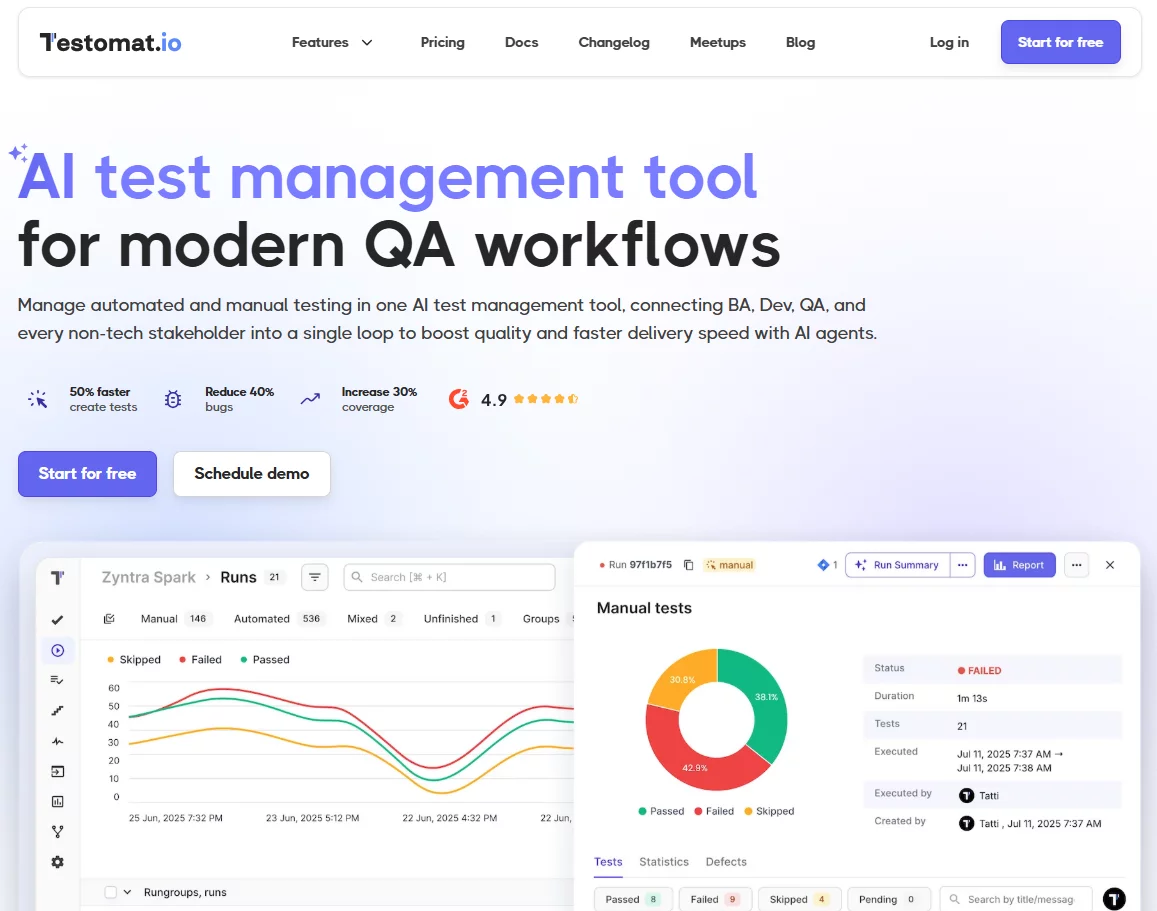

Testomat.io’s test management platform connects to the autonomous testing workflow at the organization layer, where AI-generated tests need to be tracked, managed, and reported on.

When AI tools generate test cases from requirements or exploratory runs, Testomat.io stores them, tracks their execution history, maps them to requirements for coverage analysis, and surfaces analytics that tell QA teams which areas are adequately covered and which aren’t. The heatmap view makes it easy to spot which parts of the application have been tested heavily and which are consistently undertested. The AI generates; Testomat.io organizes and reports.

This matters because autonomous test generation produces volume. Without a management layer, that volume becomes unmanageable. With it, QA teams maintain visibility into what’s being tested, what’s failing, and where coverage gaps remain — even as the tests themselves are generated and maintained by AI.

Testomat.io supports Playwright, Cypress, Selenium, Jest, Cucumber, and other frameworks used in autonomous testing architectures. CI/CD integrations with GitHub Actions, GitLab, Jenkins, and Azure DevOps mean test results feed automatically into the analytics layer without manual reporting.

Autonomous testing is not a future concept: the tools exist, teams are using them, and the results in specific contexts are clear. It’s also not complete. Fully autonomous testing across a complex system with no human oversight isn’t the reality today. What’s real is that AI handles specific parts of the testing process better than humans can at scale, and the QA teams putting that to work are running more thorough testing with less manual effort than they were two years ago.

The path forward is pragmatic: identify where autonomous testing helps most in your specific context, integrate it into your existing process, and build from there.